
If you are still facing the problem, reinstall Airflow, following the installation instructions for Apache Airflow. Then, in the `airflow.cfg` file, change the `dags_folder` path to the DAG folder that we have created.

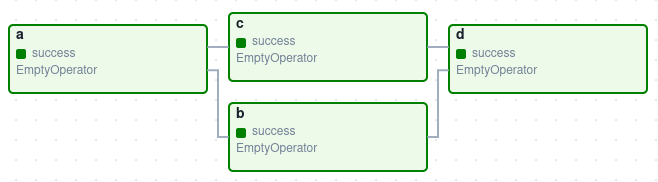

Airflow is an open source platform for programmatically authoring, scheduling and managing workflows. In Airflow, workflows are defined as a Directed Acyclic Graph (DAG) of tasks.

Before building data pipelines with Apache Airflow, it helps to know the prerequisites. To run a DAG in Airflow, you'll have to install Apache Airflow in your local Python environment or install Docker on your local machine.

The `DAG` import provides DAG functionality in Airflow, and the `PythonOperator` import provides Airflow's Python Operator, which we'll use to initiate the e-mail later on. Importing `timedelta` lets us set a timeout interval in case our DAG takes too long to run (an Airflow best practice).

You can create a DAG file anywhere on your system, but you need to set the path to that DAG folder/directory in Airflow's config file. For example, I created my DAG folder in the home directory, so I have to edit the `airflow.cfg` file from the terminal. First create the DAG folder in the home directory with `mkdir ~/DAG`, then move into the airflow directory where Airflow is installed with `cd airflow` and edit the `airflow.cfg` file there.

If a DAG does not show up, check the path set to the DAG folder in Airflow's config file. Note also that the name given to the DAG is often the same as a name already present in the list of DAGs, especially if you copied the DAG code.

With dataset scheduling, a DAG such as datasetdependentexampledag runs only after two different datasets have been updated. In the Airflow UI, the Next Run column for the downstream DAG shows the dataset dependencies for the DAG and how many of them have been updated since the last DAG run.

On Amazon MWAA, upload Apache Airflow's tutorial DAG for the latest Amazon MWAA supported Apache Airflow version to Amazon S3, and then run it in the Apache Airflow UI, as defined in Adding or updating DAGs. View the Airflow web server log group in CloudWatch Logs, as defined in Viewing Airflow logs in Amazon CloudWatch.
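To check which DAG folder Airflow is actually configured to use, you can read the `dags_folder` setting straight out of `airflow.cfg`. The sketch below is only an illustration using Python's standard `configparser`. The helper names `read_dags_folder` and `dags_folder_exists` are made up for this example, and it assumes the setting lives in the `[core]` section, which is where a default `airflow.cfg` puts it.

```python
import configparser
import os


def read_dags_folder(cfg_path):
    """Return the dags_folder value from the [core] section of an airflow.cfg file."""
    # interpolation=None keeps literal '%' characters (common in log format
    # settings) from being treated as configparser interpolation syntax.
    parser = configparser.ConfigParser(interpolation=None)
    with open(cfg_path) as fh:
        parser.read_file(fh)
    # Expand a leading '~' so a value like ~/DAG becomes an absolute path.
    return os.path.expanduser(parser.get("core", "dags_folder"))


def dags_folder_exists(cfg_path):
    """Return True if the configured dags_folder is an existing directory."""
    return os.path.isdir(read_dags_folder(cfg_path))
```

Calling `read_dags_folder(os.path.expanduser("~/airflow/airflow.cfg"))` would return the configured folder, assuming that is where your `airflow.cfg` lives. If the returned path is not the folder you created, that mismatch is the first thing to fix.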
This Apache Airflow tutorial shows you how to build a DAG with a data pipeline example. An Airflow DAG is defined in a Python file and is composed of the following components: a DAG definition, operators, and operator relationships. See the Airflow tutorial and Airflow concepts for more information on defining DAGs.

Check the DAG name given at the time of DAG object creation in the DAG Python program, in the call that begins `dag = DAG(`.

A typical symptom looks like this. The output shows `INFO - Filling up the DagBag from /usr/local/airflow/dags`, but this doesn't include the DAGs in `/usr/local/airflow/dags`. Running `ls -la /usr/local/airflow/dags/` lists:

`drwxr-xr-x 3 airflow airflow 4096 Aug 6 17:08 .`

`-rw-r--r-- 1 airflow airflow 1645 Aug 6 17:03 custom_example_bash_operator.py`

`drwxr-xr-x 2 airflow airflow 4096 Aug 6 17:08 __pycache__`

Is there some other condition that needs to be satisfied for Airflow to identify a DAG and load it?
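A copied DAG file that keeps the original DAG name is easy to miss by eye. As a rough aid, the following sketch scans a DAG folder with Python's standard `ast` module and reports names that appear in more than one `DAG(...)` call. The function names are hypothetical, and the approach only sees DAG names written as literal strings (first positional argument or `dag_id=`), so names built from variables are not detected.

```python
import ast
from collections import defaultdict
from pathlib import Path


def dag_ids_in_source(source):
    """Return literal DAG names passed to DAG(...) calls in Python source text."""
    ids = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Match both DAG(...) and something.DAG(...).
        name = func.id if isinstance(func, ast.Name) else getattr(func, "attr", None)
        if name != "DAG":
            continue
        # The name is the first positional argument or the dag_id keyword.
        candidates = node.args[:1] + [kw.value for kw in node.keywords if kw.arg == "dag_id"]
        for arg in candidates:
            if isinstance(arg, ast.Constant) and isinstance(arg.value, str):
                ids.append(arg.value)
    return ids


def find_duplicate_dag_ids(dags_folder):
    """Map each DAG name used more than once to the files that use it."""
    seen = defaultdict(list)
    for path in sorted(Path(dags_folder).rglob("*.py")):
        try:
            ids = dag_ids_in_source(path.read_text())
        except (OSError, UnicodeDecodeError, SyntaxError):
            continue  # unreadable or unparsable files are skipped in this sketch
        for dag_id in ids:
            seen[dag_id].append(path.name)
    return {dag_id: files for dag_id, files in seen.items() if len(files) > 1}
```

The files are parsed but never executed, so the check works without Airflow being importable. An empty result from `find_duplicate_dag_ids` means no literal name was found twice; it does not prove every name is unique.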

Airflow seems to be skipping the DAGs I added to `/usr/local/airflow/dags`.

Steps to create an Airflow DAG. Step 1: Importing the right modules for your DAG. In order to create a DAG, it is very important to import the right modules.
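One condition that can explain skipped files, as far as I know: with the default safe-mode discovery setting, Airflow only parses Python files whose contents mention both `dag` and `airflow`, so a file missing the right imports may never be loaded. The sketch below approximates that check with the standard library. It is not Airflow's own code, the function names are invented for this example, and the exact behaviour (for instance case sensitivity) can differ between Airflow versions.

```python
from pathlib import Path


def looks_like_dag_file(path):
    """Approximate the safe-mode check: the file mentions both 'dag' and 'airflow'."""
    content = Path(path).read_bytes().lower()
    return all(token in content for token in (b"dag", b"airflow"))


def files_likely_skipped(dags_folder):
    """List .py files under dags_folder that fail the approximate check above."""
    return sorted(
        str(p.relative_to(dags_folder))
        for p in Path(dags_folder).rglob("*.py")
        if not looks_like_dag_file(p)
    )
```

Running `files_likely_skipped("/usr/local/airflow/dags")` on the folder from the question would list candidates to inspect. A file that passes this check can still fail to load for other reasons, such as an import error inside the file.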
